Data Center Cooling Guide: Thermal Management for AI-Driven High-Density Computing

As artificial intelligence workloads push hardware to unprecedented limits, thermal management has become the primary bottleneck for modern data centers. This comprehensive guide explores cutting-edge cooling strategies, compares air vs. liquid cooling technologies, and demonstrates how MEGA Tech's industrial fan solutions eliminate thermal throttling while maximizing infrastructure ROI.

1. Introduction: The Critical Role of Thermal Management

The AI Revolution's Thermal Challenge

The explosion of AI-driven applications, from large language models to real-time video rendering, has fundamentally transformed data center thermal dynamics. As of 2026, the AI data center market is projected to grow from $236 billion to $933 billion by 2030, a roughly 4x increase driven by demand for high-density computing power.

The Critical Numbers:

- Power demand surge: U.S. AI data centers will consume 123 GW by 2035, up from 4 GW in 2024, a roughly 30x increase
- Energy consumption: Global data center electricity use will grow 50% by 2027 and 165% by 2030
- Cooling costs: Thermal management systems account for 40% of total data center energy consumption

Why Thermal Management Determines Success or Failure

Inadequate cooling doesn't just increase energy bills—it directly threatens business operations:

| Consequence | Impact | Cost |
|---|---|---|
| Thermal Throttling | CPUs/GPUs automatically downclock when overheated | 30-50% performance loss |
| Hardware Failure | Components exceed thermal limits, leading to premature death | $50,000-$500,000 per incident |
| Downtime | Critical systems go offline during thermal events | $5,600-$9,000 per minute |
| Energy Waste | Inefficient cooling draws excessive power | 20-40% higher PUE |

The Business Case: Investing in precision cooling solutions delivers measurable ROI through:

- ✅ Unleashing 100% hardware computing capacity
- ✅ Extending component lifespan (MTBF) by years
- ✅ Reducing energy costs through intelligent thermal optimization
- ✅ Eliminating thermal throttling-induced delays

2. 2026 Data Center Cooling Trends: What's Changing

Trend 1: AI-Driven Compute Density Explosion

Traditional data centers designed for 5-10 kW per rack now face demands for 20-40 kW, driven by:

- Multi-GPU arrays for AI training (4-8 GPUs per server)
- High-frequency trading platforms requiring ultra-low latency
- Real-time rendering farms for 3D animation and VFX

The Challenge: Standard 25mm-thick fans cannot generate sufficient static pressure to force air through dense heatsink arrays, cables, and storage drives. Result: localized "hot spots" that trigger thermal throttling.

Trend 2: Energy Efficiency as a Strategic Constraint

Power availability has become the #1 bottleneck for data center expansion:

- Utility grids struggle to meet demand
- Sustainability requirements mandate measurable ESG progress
- PUE (Power Usage Effectiveness) targets tighten annually

2026 Target PUE: < 1.4 (vs. industry average of 1.58)
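
To make the PUE target concrete, here is a minimal sketch of the standard definition (total facility power divided by IT equipment power). The 1,000 kW IT load is a hypothetical figure chosen only for illustration; the two PUE values are the ones quoted above.

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power Usage Effectiveness: total facility power / IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment power must be positive")
    return total_facility_kw / it_equipment_kw


def overhead_kw(it_equipment_kw: float, pue_value: float) -> float:
    """Non-IT power (cooling, power distribution, lighting) implied by a PUE."""
    return it_equipment_kw * (pue_value - 1.0)


# Hypothetical 1,000 kW IT load, compared at the two PUE values quoted above.
it_load_kw = 1000.0
average_overhead = overhead_kw(it_load_kw, 1.58)
target_overhead = overhead_kw(it_load_kw, 1.40)
saved_kw = average_overhead - target_overhead

print(f"Overhead at PUE 1.58: {average_overhead:.0f} kW")
print(f"Overhead at PUE 1.40: {target_overhead:.0f} kW")
print(f"Reduction in overhead: {saved_kw:.0f} kW")
```

Under these assumptions, moving from the industry average to the target removes roughly a third of the non-IT overhead for the same compute load.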

Trend 3: Supply Chain Redesign for Cooling Components

Global shortages of specialized cooling fans, heatsinks, and thermal interface materials have forced operators to:

- Diversify supplier portfolios
- Build strategic inventory reserves
- Prioritize partners with proven reliability and customization capabilities

Trend 4: Specialized Cooling Becomes Competitive Advantage

The "one-size-fits-all" approach is dead. Leading operators now deploy zoned cooling architectures:

- High-static pressure fans for CPU/GPU clusters
- Low-vibration fans for storage arrays
- High-airflow exhaust fans for rack-level thermal extraction

Trend 5: Sustainability From Initiative to Mandate

Investors, regulators, and enterprise clients now require:

- Documented energy efficiency metrics
- Carbon footprint reporting
- Water usage accountability (for evaporative cooling systems)

3. Cooling Technologies Comparison: Air vs. Liquid

3.1 Traditional Air Cooling

How It Works: Fans force ambient air through heatsinks attached to hot components, carrying heat away via forced convection.
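
The forced-convection description above implies a simple sizing relation: the airflow needed to carry away a heat load P with an air temperature rise ΔT is Q = P / (ρ · c_p · ΔT). The sketch below applies it with approximate air properties; the 10 kW load and 12 °C rise are illustrative assumptions, not figures from a specific deployment.

```python
AIR_DENSITY_KG_M3 = 1.2            # approximate, near sea level at about 20 °C
AIR_SPECIFIC_HEAT_J_KG_K = 1005.0  # approximate specific heat of dry air
CFM_PER_M3_S = 2118.88             # 1 m^3/s expressed in cubic feet per minute


def required_airflow_cfm(heat_load_w: float, delta_t_c: float) -> float:
    """Airflow needed to remove heat_load_w with an air temperature rise of delta_t_c."""
    if delta_t_c <= 0:
        raise ValueError("temperature rise must be positive")
    m3_per_s = heat_load_w / (AIR_DENSITY_KG_M3 * AIR_SPECIFIC_HEAT_J_KG_K * delta_t_c)
    return m3_per_s * CFM_PER_M3_S


# Hypothetical rack: 10 kW of heat, 12 °C rise between intake and exhaust air.
flow = required_airflow_cfm(10_000, 12)
print(f"Required airflow: {flow:.0f} CFM")
```

Because airflow scales inversely with ΔT, allowing a larger temperature rise across the rack reduces the airflow the fans must deliver, at the cost of hotter exhaust air.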

Advantages:

- ✅ Simple installation and maintenance
- ✅ Lower upfront capital costs
- ✅ Proven reliability (decades of deployment)
- ✅ Easy troubleshooting and component replacement

Limitations:

- ❌ Limited cooling capacity for high-density racks (>20 kW)
- ❌ Higher PUE in hot climates
- ❌ Noise levels at high fan speeds

Best For: Racks up to 15-20 kW, standard server configurations, cost-sensitive deployments

3.2 Liquid Cooling Technologies

Direct-to-Chip Liquid Cooling: Coolant flows through cold plates directly attached to CPUs/GPUs.

Advantages:

- ✅ Superior heat transfer efficiency
- ✅ Handles extreme densities (40-100 kW per rack)
- ✅ Reduced fan noise
- ✅ Lower PUE in high-density environments

Limitations:

- ❌ Higher capital costs ($2,000-$5,000 per rack)
- ❌ Complex installation and maintenance
- ❌ Risk of leaks causing catastrophic damage
- ❌ Requires specialized facility infrastructure

Rear-Door Heat Exchangers: Water-cooled doors replace standard rack doors.

Advantages:

- ✅ Retrofittable to existing racks
- ✅ Handles 15-30 kW per rack
- ✅ Minimal facility modifications

Limitations:

- ❌ Requires chilled water supply
- ❌ Plumbing complexity
- ❌ Lower efficiency than direct-to-chip

3.3 Hybrid Cooling: The Optimal Balance

Strategy: Deploy liquid cooling for extreme-density zones (GPU clusters) while using optimized air cooling for standard compute and storage arrays.

Why It Works:

- 💰 Cost-effective: Concentrates expensive liquid infrastructure where it matters most
- 🔧 Flexible: Allows phased migration to liquid cooling
- ⚡ Efficient: Right-sizes cooling investment to actual thermal loads

Best For: Mixed-workload data centers, AI training clusters with standard supporting infrastructure

4. MEGA Tech Data Center Cooling Solutions

At MEGA Technology, we engineer targeted cooling solutions designed to resolve specific rack-level thermal challenges. We don't believe in "one-size-fits-all"—instead, we provide specialized fan architectures tailored to distinct structural zones within the server rack.

4.1 The Heavyweight: MEGA Tech 12038 Series (For Extreme Density)

Target Application: 4U GPU SuperServers, high-density ASIC arrays, AI training clusters

The Problem:

- Extreme internal air resistance from dense heatsinks, tightly packed cables, and multiple GPUs
- Standard 25mm-thick fans cannot generate sufficient static pressure
- Result: Severe thermal throttling, system crashes, 30-50% performance loss

The Solution: The 12038's 38mm thickness enables a larger motor and steeper blade pitch, generating massive static pressure. This acts as a powerful engine, forcing cold air through extreme resistance barriers—completely eliminating localized hot spots.

Technical Specifications:

| Parameter | Value | Competitive Advantage |
|---|---|---|
| Dimensions | 120×120×38mm | 38mm thickness = 50% more pressure than 25mm fans |
| Airflow | 63.9-246.6 CFM | Highest in class for rack cooling |
| Static Pressure | Up to 6.44 mmH₂O | Penetrates dense heatsink arrays |
| Speed Range | 1,800-5,800 RPM | PWM-controlled for intelligent thermal management |
| Bearing Type | Dual Ball Bearing | 70,000+ hours MTBF at 25°C |
| Voltage | 12V / 24V DC | Flexible power supply compatibility |

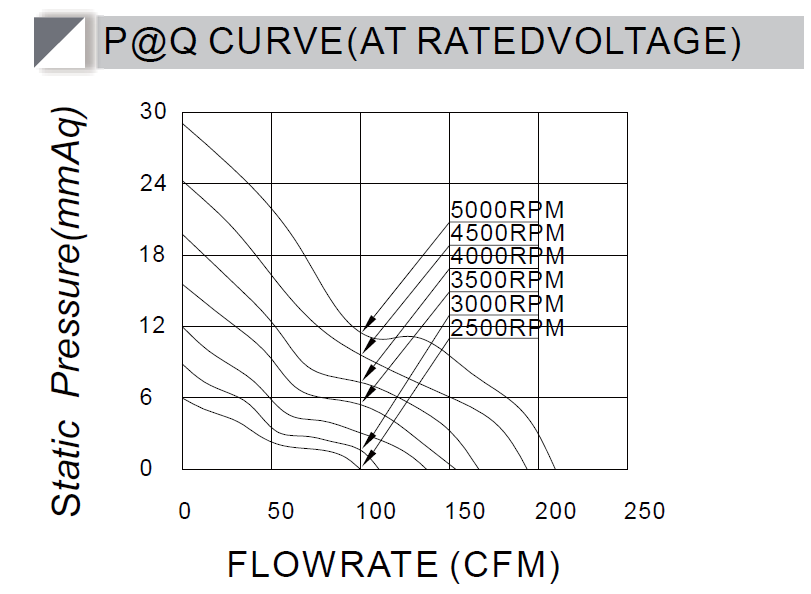

P-Q Performance Curve:

The 12038's exceptional static pressure enables airflow through dense obstacles where standard fans fail.
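
A P-Q curve is read by finding where the fan curve crosses the system's resistance curve. The sketch below estimates that operating point under two loudly simplifying assumptions: the fan curve is treated as a straight line between maximum pressure and maximum airflow (real curves are not linear), and the chassis resistance follows P = k · Q². The resistance coefficient `k` is a made-up value; measured curves should replace both assumptions in practice.

```python
import math


def operating_point(max_pressure: float, max_airflow: float, k: float):
    """Intersect a linearized fan curve with a quadratic system curve.

    Fan (assumed linear):  P = max_pressure * (1 - Q / max_airflow)
    System (assumed):      P = k * Q**2
    Returns (airflow, pressure) at the intersection.
    """
    if max_pressure <= 0 or max_airflow <= 0 or k <= 0:
        raise ValueError("all parameters must be positive")
    slope = max_pressure / max_airflow
    # Positive root of k*Q^2 + slope*Q - max_pressure = 0.
    airflow = (-slope + math.sqrt(slope ** 2 + 4 * k * max_pressure)) / (2 * k)
    return airflow, k * airflow ** 2


# Illustrative inputs: 6.44 mmH2O and 246.6 CFM are the upper limits quoted
# for the 12038 series; k = 0.0002 mmH2O/CFM^2 is a hypothetical resistance.
airflow_cfm, pressure_mmh2o = operating_point(6.44, 246.6, 0.0002)
print(f"Operating point: {airflow_cfm:.1f} CFM at {pressure_mmh2o:.2f} mmH2O")
```

A denser heatsink stack corresponds to a larger `k`, which moves the intersection toward lower airflow and higher pressure. This is why free-air CFM alone is a poor predictor of in-chassis performance.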

Real-World Application:

Case Study: European Cloud Rendering Farm

The Client: A top European cloud rendering farm supplying high-density computing power to Hollywood-grade 3D animation and VFX studios.

The Challenge:

- 4U high-density compute racks with next-generation high-power GPUs
- Standard cooling fans couldn't penetrate dense heatsink arrays
- GPUs hitting 90°C+ at full load, triggering thermal throttling
- Rendering tasks estimated at 10 hours dragged to 15 hours
- Client deliverables delayed, electricity bills soaring

The Solution:

- Complete upgrade to MEGA Tech 12038 industrial fans
- "Fan Wall" matrix installation: 4-6 units side-by-side at rack front
- High-precision PWM intelligent speed control
- Top-tier dual ball bearings for 24/7 reliability

The Results (after 1 week):

| Metric | Before | After | Improvement |
|---|---|---|---|
| GPU Core Temp | 95°C+ | 70°C | -25°C |
| Thermal Throttling | Frequent | Eliminated | 100% resolved |
| Rendering Efficiency | Baseline | +30% | Faster delivery |
| Downtime (6 months) | Multiple events | Zero | 100% uptime |

Client Testimonial:

"MEGA Technology isn't just selling fans; they are delivering computing reliability. The terrifying static pressure of the 12038 series is the most solid line of defense in our entire data center. Thanks to MEGA Tech, we no longer sweat over server temperature gauges during the summer."

— Thomas K., Chief Infrastructure Officer

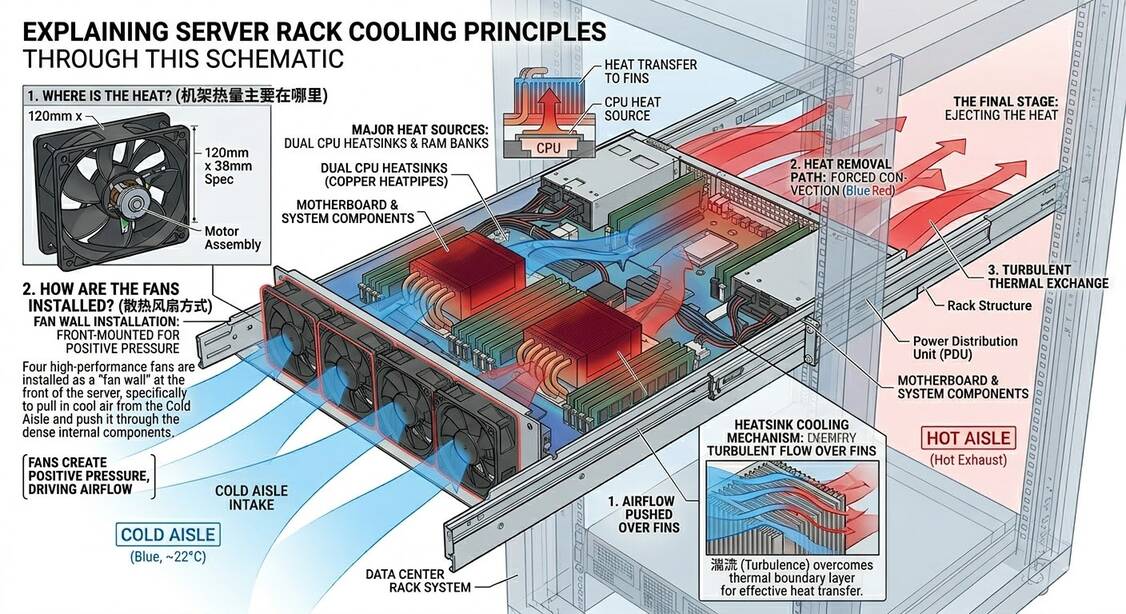

12038 fans installed in a 4U GPU server rack, creating a high-pressure airflow barrier.

Thermal pathway: Cold aisle air (blue) forced through dense heatsinks by 12038 fans, expelling hot air (red) to hot aisle.

4.2 The Balanced Performer: MEGA Tech 8025 Series (For 2U Storage & Networking)

Target Application: 2U Enterprise NAS, Storage Servers, Core Switches, UPS Systems

The Problem:

- Sensitive mechanical hard drives (HDDs) require stable temperatures but are vulnerable to vibration damage
- Standard fans either provide inadequate cooling OR generate excessive vibration
- Result: Data corruption risks, premature HDD failure, system instability

The Solution: The 8025 series delivers a perfect balance of high CFM and low vibration, rapidly exhausting heat while operating smoothly. This protects sensitive mechanical components from resonance damage, ensuring data integrity while maintaining optimal operating temperatures.

Technical Specifications:

| Parameter | Value | Why It Matters |
|---|---|---|
| Dimensions | 80×80×25mm | Standard 2U rack compatibility |
| Airflow | 24.4-126.5 CFM | Sufficient for storage array cooling |
| Static Pressure | 1.9-11.4 mmH₂O | Overcomes moderate airflow resistance |
| Speed Range | 2,500-6,500 RPM | PWM control for noise optimization |
| Bearing Options | Sleeve / Ball / Hydraulic | Choose based on installation orientation |
| Noise Level | 25-45 dBA | Quiet operation for office environments |
| MTBF | 40,000-70,000 hours | Years of maintenance-free operation |

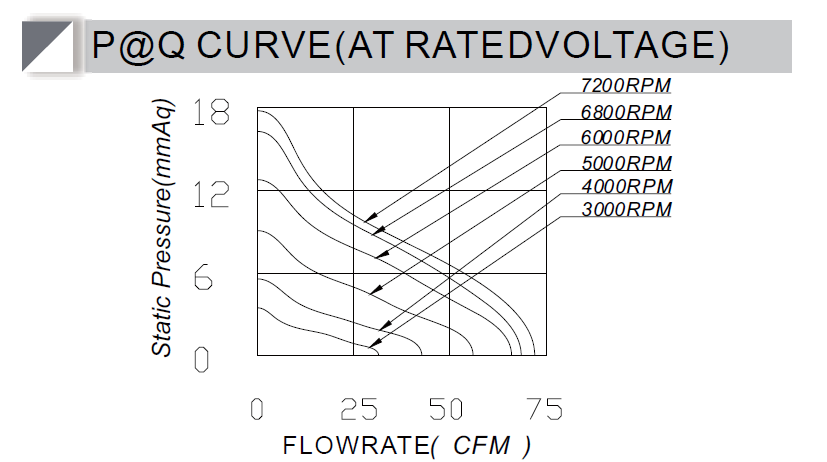

P-Q Performance Curve:

Balanced airflow and pressure curve optimized for storage and networking equipment.

Why Choose 8025 for Storage Arrays:

- ✅ Low vibration design protects HDD platters from resonance damage
- ✅ Optimized airflow maintains 35-40°C HDD operating temperature
- ✅ Quiet operation suitable for office-adjacent deployments
- ✅ Long lifespan reduces maintenance overhead

MEGA Tech 8025 industrial fan—precision-engineered for storage and networking thermal management.

8025 fans installed in a 2U storage server, providing balanced cooling with minimal vibration.

4.3 The Airflow Maximizer: MEGA Tech 12025 Series (For Rack-Level Exhaust)

Target Application: Top-of-rack fan trays, edge computing nodes, general-purpose cooling

The Problem:

- Heat accumulates at rack tops and in enclosed cabinets
- Inadequate exhaust capacity leads to heat recirculation
- Result: Rising ambient temperatures, reduced cooling efficiency, hot spots

The Solution: Designed with an exceptional airflow-to-noise ratio, the 12025 series acts as the primary exhaust mechanism for the entire cabinet. It efficiently pulls accumulated hot air out of the rack and directs it into the facility's return plenum, preventing heat recirculation.

Technical Specifications:

| Parameter | Value | Application Benefit |
|---|---|---|
| Dimensions | 120×120×25mm | Standard rack fan tray fit |
| Airflow | 64.2-151.7 CFM | High-volume exhaust capability |
| Static Pressure | 2.1-5.8 mmH₂O | Sufficient for moderate resistance |
| Speed Range | 1,820-3,600 RPM | Wide range for thermal optimization |
| Noise Level | 22-38 dBA | Quiet operation for noise-sensitive environments |
| MTBF | 70,000 hours | About 8 years of continuous operation |

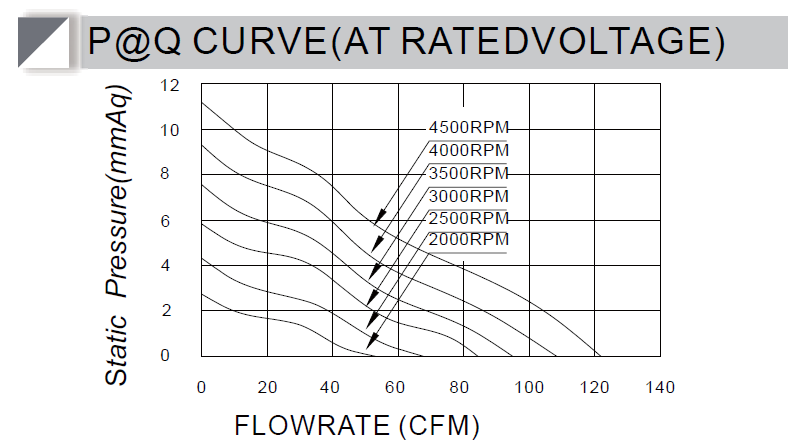

P-Q Performance Curve:

High airflow capacity optimized for rack-level exhaust applications.

Strategic Deployment:

- 📍 Top-of-rack trays: 2-4 units to exhaust rising hot air
- 📍 Edge computing nodes: Compact cooling for constrained spaces
- 📍 Network switches: Maintain switch temperatures without overwhelming noise

Why 12025 Excels at Rack Exhaust:

- ✅ Maximum airflow clears hot air from cabinet tops
- ✅ Low noise won't disrupt data center operations
- ✅ Energy efficient PWM control reduces power during low-load periods
- ✅ Long lifespan ensures years of reliable operation
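
The energy benefit of PWM speed reduction can be estimated with the fan affinity laws, which are idealized scaling rules: airflow scales with speed, static pressure with speed squared, and shaft power with speed cubed. The full-speed figures in this sketch are hypothetical placeholders, not datasheet values for any MEGA Tech model.

```python
def scale_by_affinity(airflow_cfm: float, pressure_mmh2o: float,
                      power_w: float, speed_ratio: float):
    """Scale full-speed fan figures to a new speed using the affinity laws."""
    if speed_ratio <= 0:
        raise ValueError("speed ratio must be positive")
    return (
        airflow_cfm * speed_ratio,          # airflow  ~ N
        pressure_mmh2o * speed_ratio ** 2,  # pressure ~ N^2
        power_w * speed_ratio ** 3,         # power    ~ N^3
    )


# Hypothetical fan: 150 CFM, 5.0 mmH2O, 10 W at full speed, slowed to 60%.
flow, pressure, power = scale_by_affinity(150.0, 5.0, 10.0, 0.6)
print(f"At 60% speed: {flow:.0f} CFM, {pressure:.2f} mmH2O, {power:.2f} W")
```

In this idealized model, running at 60% speed keeps 60% of the airflow while drawing only about a fifth of the power. Real fans deviate from these laws, especially at low speeds.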

MEGA Tech 12025 industrial fan—the workhorse for rack-level thermal extraction.

12025 fans mounted in a server chassis, providing efficient thermal extraction.

4.4 Product Comparison Matrix

| Feature | 12038 Series | 8025 Series | 12025 Series |
|---|---|---|---|
| Best For | Extreme density (GPU clusters) | Storage & networking | Rack exhaust & edge |
| Thickness | 38mm | 25mm | 25mm |
| Max Airflow | 246.6 CFM | 126.5 CFM | 151.7 CFM |
| Max Static Pressure | 6.44 mmH₂O | 11.4 mmH₂O | 5.8 mmH₂O |
| Vibration Level | Moderate | Low | Low |
| Noise (Max) | 45-65 dBA | 25-45 dBA | 22-38 dBA |
| MTBF | 70,000 hrs | 40,000-70,000 hrs | 70,000 hrs |
| Voltage Options | 12V / 24V | 12V / 24V | 12V / 24V |

5. Implementation Guide: Deploying MEGA Tech Cooling Solutions

5.1 Step 1: Assess Your Current Thermal Profile

Tools Required:

- Infrared thermal camera or temperature sensors
- Airflow measurement device
- Power monitoring equipment

What to Measure:

1. Hot spot identification: Map temperatures across rack components
2. Airflow bottlenecks: Identify restricted airflow paths
3. Current PUE: Establish baseline energy efficiency
4. Fan health: Check existing fan RPM, noise, and vibration levels

Warning Signs You Need Better Cooling:

- ⚠️ Component temperatures exceeding 80°C under load
- ⚠️ Thermal throttling events in system logs
- ⚠️ Fan speeds consistently at 80%+ capacity
- ⚠️ Uneven temperature distribution across rack

5.2 Step 2: Select the Right Fan Architecture

Decision Framework:

    Is your rack density > 15 kW?
    ├─ YES → Deploy 12038 series for primary cooling
    │        (Consider hybrid liquid cooling for > 30 kW)
    │
    └─ NO → Does rack contain mechanical HDDs?
            ├─ YES → Use 8025 series (low vibration)
            │
            └─ NO → Standard 12025 series sufficient
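
The decision framework above can be expressed as a small function, which may be handy for tagging racks in an inventory script. The function name and return strings are illustrative choices; the thresholds are the ones in the tree.

```python
def select_fan_series(rack_kw: float, has_mechanical_hdds: bool) -> str:
    """Return the fan series suggested by the decision framework."""
    if rack_kw > 15:
        # High-density branch: the HDD question is not asked here.
        if rack_kw > 30:
            return "12038 (consider hybrid liquid cooling)"
        return "12038"
    if has_mechanical_hdds:
        return "8025"
    return "12025"


# Hypothetical racks: (name, density in kW, contains mechanical HDDs)
racks = [
    ("gpu-train-01", 32.0, False),
    ("gpu-infer-02", 18.0, False),
    ("nas-03", 6.0, True),
    ("web-04", 9.0, False),
]
for name, kw, hdds in racks:
    print(f"{name}: {select_fan_series(kw, hdds)}")
```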

Configuration Examples:

Example A: AI Training Cluster (4U GPU Server)

- Primary cooling: 6× 12038 fans (front fan wall)
- Exhaust: 2× 12025 fans (rear)
- Result: Handles 25-35 kW per rack

Example B: Enterprise Storage Array (2U NAS)

- Cooling: 4× 8025 fans (side intake)
- Exhaust: 2× 8025 fans (rear)
- Result: Maintains 35-40°C HDD temps with minimal vibration

Example C: Standard Compute Rack (42U)

- Top-of-rack exhaust: 4× 12025 fans
- Per-server: 2× 12025 fans each
- Result: Handles 8-12 kW per rack efficiently

5.3 Step 3: Installation Best Practices

Critical Installation Guidelines:

- Airflow Direction: Ensure fans push air from cold aisle to hot aisle
  - Intake: Cold aisle side (typically 18-22°C)
  - Exhaust: Hot aisle side (typically 30-40°C)
- Fan Spacing: Maintain 1U (1.75") clearance between fan arrays and obstacles
- Cable Management: Route cables to minimize airflow blockage
  - Use cable arms and vertical managers
  - Bundle cables away from primary airflow paths
- Vibration Isolation: For storage arrays, use rubber fan mounts to eliminate vibration transfer to HDDs
- PWM Control Setup:
  - Connect PWM wires to motherboard fan headers
  - Configure BIOS/software to adjust fan speeds based on temperature sensors
  - Set minimum speed to prevent bearing damage during idle
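
The PWM setup steps amount to defining a temperature-to-duty curve with a floor. The sketch below shows one way to express that as piecewise-linear interpolation. The curve points and the 30% minimum duty are hypothetical values for illustration; actual settings depend on the fan datasheet and the BIOS or controller in use.

```python
# Hypothetical fan curve: (temperature in °C, PWM duty in %), sorted by temperature.
CURVE = [(40.0, 20.0), (60.0, 50.0), (80.0, 100.0)]


def pwm_duty_percent(temp_c: float, curve=CURVE, min_duty: float = 30.0) -> float:
    """Interpolate a PWM duty cycle from temperature, never below min_duty."""
    if temp_c <= curve[0][0]:
        duty = curve[0][1]
    else:
        duty = curve[-1][1]  # default for temperatures at or above the last point
        for (t0, d0), (t1, d1) in zip(curve, curve[1:]):
            if t0 <= temp_c <= t1:
                duty = d0 + (d1 - d0) * (temp_c - t0) / (t1 - t0)
                break
    # The floor implements the "set minimum speed" guideline; cap at 100%.
    return max(min_duty, min(100.0, duty))


for temp in (30, 50, 70, 95):
    print(f"{temp} °C -> {pwm_duty_percent(temp):.0f}% duty")
```

Keeping the floor separate from the curve makes it easy to steepen the curve seasonally without accidentally allowing the fan to drop below its safe idle speed.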

5.4 Step 4: Optimize and Monitor

Key Metrics to Track:

| Metric | Target | Action if Out of Range |
|---|---|---|
| Component Temp | < 80°C under load | Add fans, improve airflow |
| PUE | < 1.4 | Optimize fan speeds, check for air leaks |
| Fan RPM | 40-70% at typical load | Above 70%: add fans or move to a higher-capacity model. Below 40%: fan is likely oversized, consider a smaller model |
| Noise Level | < 55 dBA in occupied areas | Reduce fan speeds, add sound dampening |
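
The targets in this table lend themselves to an automated check. The following is a sketch only: the `RackReading` structure and its field names are hypothetical, and it interprets a fan running above the 40-70% band as a sign that more cooling capacity is needed and one below the band as a candidate for a smaller model, which is an assumption layered on top of the table.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class RackReading:
    component_temp_c: float
    pue: float
    fan_duty_percent: float
    noise_dba: float


def check_reading(r: RackReading) -> List[str]:
    """Return suggested actions for any metric outside its target."""
    actions = []
    if r.component_temp_c >= 80:
        actions.append("Component temp at or above 80 °C: add fans, improve airflow")
    if r.pue >= 1.4:
        actions.append("PUE at or above 1.4: optimize fan speeds, check for air leaks")
    if r.fan_duty_percent > 70:
        actions.append("Fan above 70%: add fans or use a higher-capacity model")
    elif r.fan_duty_percent < 40:
        actions.append("Fan below 40%: fan may be oversized")
    if r.noise_dba >= 55:
        actions.append("Noise at or above 55 dBA: reduce fan speeds, add dampening")
    return actions


# Hypothetical reading from a rack that is running hot and loud.
hot = RackReading(component_temp_c=85.0, pue=1.5, fan_duty_percent=85.0, noise_dba=60.0)
for action in check_reading(hot):
    print(action)
```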

Continuous Improvement:

- Review thermal logs monthly
- Adjust PWM curves seasonally (summer/winter ambient differences)
- Replace fans approaching MTBF limits (typically 60,000 hours)
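
For planning replacements, it helps to convert rated hours into calendar time at 24/7 duty. The sketch below does that arithmetic and tracks remaining hours against the 60,000-hour replacement point mentioned above. Note that MTBF is a statistical mean rather than a guaranteed service life, so this is a planning aid, not a failure prediction; the function names are illustrative.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours of continuous operation


def hours_to_years(hours: float) -> float:
    """Convert operating hours to years of 24/7 operation."""
    return hours / HOURS_PER_YEAR


def hours_until_replacement(runtime_hours: float, replace_at_hours: float = 60_000) -> float:
    """Hours left before the planned replacement point (never negative)."""
    return max(0, replace_at_hours - runtime_hours)


for rating in (40_000, 60_000, 70_000):
    print(f"{rating:,} h is about {hours_to_years(rating):.1f} years of continuous use")

remaining = hours_until_replacement(45_000)
print(f"Fan with 45,000 h logged: {remaining:,} h ({hours_to_years(remaining):.1f} years) to replacement")
```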

6. The MEGA Technology Advantage: Why Leading Data Centers Choose Us

6.1 Technical Excellence

Engineering Expertise: 20+ years of thermal management experience in industrial cooling applications

Rigorous Testing: Every fan undergoes:

- 48-hour burn-in testing at maximum load
- Vibration analysis for bearing quality
- Acoustic testing for noise certification
- Environmental testing (temperature, humidity, dust)

Customization Capability:

- Custom fan curves optimized for your specific airflow resistance
- Private label options for OEM partners
- Special voltage, connector, and wire length configurations
- Rapid prototyping (2-4 weeks for custom designs)

6.2 Quality Assurance

Certifications:

- ✅ CE (European Conformity)
- ✅ UL/cUL (Underwriters Laboratories)
- ✅ TUV (German safety certification)
- ✅ RoHS (Restriction of Hazardous Substances)
- ✅ REACH (EU chemical regulation compliance)

Reliability Standards:

- Industrial-grade components rated for 24/7 operation
- Dual ball bearings for maximum lifespan
- MTBF: 40,000-70,000 hours (depending on model)
- Operating temperature: -10°C to +70°C

6.3 Customer ROI

Quantified Benefits:

| Benefit | Typical Result | Dollar Value |
|---|---|---|
| Eliminated Thermal Throttling | +30% compute performance | Higher revenue per server |
| Extended Hardware Lifespan | +2-3 years MTBF | $10,000-$50,000 per rack |
| Reduced Energy Costs | -15-25% cooling power | $5,000-$15,000 annually per rack |
| Zero Downtime | No thermal-related outages | Prevents $300,000+ losses per incident |

7. Future Outlook: The Evolution of Data Center Cooling

7.1 AI-Optimized Thermal Management

Emerging Technology: Machine learning algorithms that:

- Predict thermal loads based on workload patterns
- Dynamically adjust fan speeds across entire data centers
- Optimize cooling for minimum energy consumption
- Detect anomalies indicating impending fan failures

MEGA Tech Investment: We're developing smart fan controllers with built-in AI capabilities for predictive thermal management.
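
A full machine learning pipeline is beyond a short example, but the anomaly-detection idea can be illustrated with a much simpler stand-in: a rolling z-score over fan RPM samples. This is not MEGA Tech's controller logic. The window size, threshold, sigma floor, and sample data are all hypothetical.

```python
from statistics import mean, pstdev
from typing import List


def rpm_anomalies(rpm_samples: List[float], window: int = 5,
                  z_threshold: float = 3.0) -> List[int]:
    """Return indices whose RPM deviates sharply from the preceding window."""
    flagged = []
    for i in range(window, len(rpm_samples)):
        history = rpm_samples[i - window:i]
        mu = mean(history)
        # Floor sigma at 1 RPM so a perfectly flat history cannot divide by zero.
        sigma = max(pstdev(history), 1.0)
        if abs(rpm_samples[i] - mu) / sigma > z_threshold:
            flagged.append(i)
    return flagged


# Hypothetical tachometer readings with one sudden drop at index 6.
samples = [3000, 3010, 2995, 3005, 3000, 3002, 2400, 3001]
print("Anomalous sample indices:", rpm_anomalies(samples))
```

A sudden RPM drop at constant PWM duty is one plausible early sign of bearing wear or obstruction. A production system would likely also compare measured RPM against the commanded duty cycle rather than relying on recent history alone.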

7.2 Sustainability Integration

2026-2030 Trends:

- Liquid cooling adoption will grow 300% for high-density applications
- Waste heat recovery systems will become standard
- Data centers will target PUE < 1.2
- Water-free cooling technologies will gain prominence

MEGA Tech Commitment:

- Energy-efficient fan designs reducing power consumption by 20-30%
- RoHS-compliant materials throughout product line
- Partnerships with sustainability-focused facility designers

7.3 Edge Computing Thermal Challenges

The New Frontier: Edge deployments in harsh environments (outdoor cabinets, factory floors, remote locations) require:

- Wider operating temperature ranges (-20°C to +70°C)
- Dust and moisture protection (IP54-IP68 ratings)
- Fanless cooling options for noise-sensitive environments

MEGA Tech Solution: Our ruggedized fan series with IP68 protection and extended temperature ranges.

8. Get Started with MEGA Technology

8.1 Technical Consultation

Free Thermal Assessment: Our engineering team will:

- Analyze your current cooling infrastructure
- Identify thermal bottlenecks and optimization opportunities
- Recommend optimal fan configurations
- Provide ROI projections for upgrades

8.2 Product Availability

Lead Times:

- Standard models (12025, 8025, 12038): 1-2 weeks
- Custom configurations: 2-4 weeks
- Large orders (>10,000 units): 4-6 weeks

Minimum Order Quantities:

- Standard models: 100 units
- Custom models: 500 units

8.3 Contact Us

MEGA Technology Co., Ltd. - 📧 Email: [email protected] - 📞 Phone: +86-13570567086 - 🌐 Website: cnmegatech.com - 📍 Headquarters: Shenzhen, Guangdong, China

Get a Quote: Contact us for pricing, technical specifications, and custom solutions.

Conclusion: Precision Cooling for the AI Era

As AI workloads continue to push hardware to its limits, thermal management has evolved from a support function to a strategic competitive advantage. Data centers that master precision cooling will:

- ✅ Maximize hardware performance without thermal throttling

- ✅ Extend infrastructure lifespan, protecting capital investments

- ✅ Reduce energy costs through optimized thermal efficiency

- ✅ Achieve sustainability targets for ESG compliance

- ✅ Eliminate thermal-related downtime

MEGA Technology's targeted cooling matrix—combining the 12038 heavyweight, 8025 balanced performer, and 12025 airflow maximizer—provides the precision, reliability, and ROI that modern data centers demand.

Don't let thermal management become your bottleneck. Contact MEGA Technology today and discover how our industrial cooling solutions can transform your data center's performance, efficiency, and reliability.

Tags: #DataCenterCooling #AIThermalManagement #12038Fan #8025Fan #12025Fan #HighDensityComputing #PUEOptimization #ThermalThrottling #IndustrialCooling #ServerCooling

Last Updated: March 26, 2026
